Even if you have spent long hours adding new content to your website, visitors won't find it through search until Google indexes the new pages. Unfortunately, you might discover that although quite some time has passed, your new pages still haven't been indexed.

There are many reasons why Google might take a long time to index new pages ⏳, which is why tracking down the culprit can itself be time-consuming.

However, that doesn't mean your only option is to wait and hope that Googlebot eventually reaches the new pages. In a moment, we'll show you how to help Google index the new content on your website much faster.

Check Your Robots.txt File First

Before you start wondering what could be making Google's crawlers so slow, we recommend checking your robots.txt file first. But wait, what exactly is a robots.txt file?

Robots.txt is a plain-text file at the root of your domain that gives crawlers instructions: it specifies which pages they may and may not crawl. You probably don't need every page on your website to be crawled, and keeping crawlers away from the unimportant ones helps you avoid exceeding your crawl budget.

According to Matt Diggity of The Search Initiative, although it doesn't happen often, you could have mistakenly marked some of your new pages as off-limits to Google's crawlers.

That's why, before you proceed to the next steps, make sure that your own actions are not what is stopping the page from being indexed.
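If you want to double-check a handful of new URLs against your rules, Python's standard-library `urllib.robotparser` can do a quick first pass. This is a minimal sketch: the robots.txt contents and page URLs below are hypothetical placeholders, and the standard-library parser uses simple prefix matching, so it may not handle Google's wildcard syntax (`*`, `$`) exactly the way Googlebot does. Treat Google Search Console as the authoritative check.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents; in practice, copy them from
# https://example.com/robots.txt (your own domain)
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
Disallow: /blog/new-
"""
# Note: the "/blog/new-" prefix accidentally blocks every post whose slug starts with "new-"

# Hypothetical URLs of newly published pages
NEW_PAGES = [
    "https://example.com/blog/new-product-launch",
    "https://example.com/blog/indexing-tips",
    "https://example.com/drafts/holiday-sale",
]


def blocked_pages(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse rules from text instead of fetching them
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


for url in blocked_pages(ROBOTS_TXT, NEW_PAGES):
    print("Blocked for Googlebot:", url)
```

With the sample rules, the new product-launch post and the draft are reported as blocked, while `/blog/indexing-tips` is allowed.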

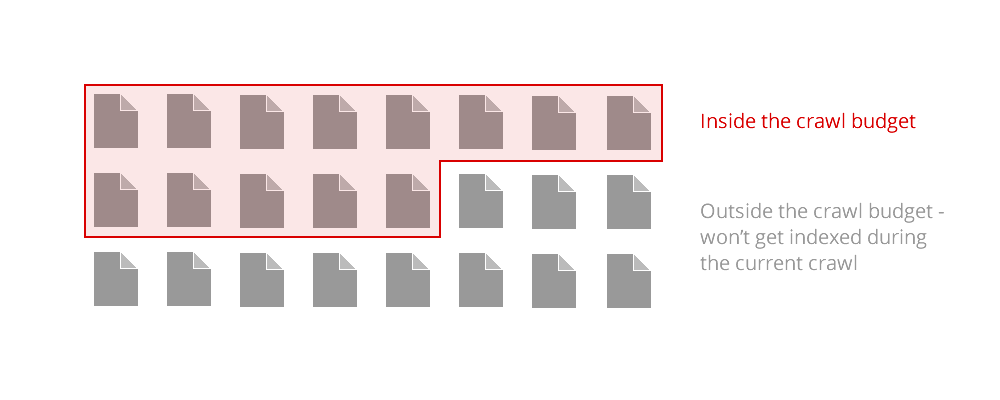

Don’t Waste Your Crawl Budget

If you want your new pages to rank well and bring new visitors to your website, they need to be indexed first. Your crawl budget is roughly the number of pages Googlebot is willing and able to crawl on your site in a given period. Unfortunately, if you publish a lot of new content at a fast pace, much of it might not get indexed because you have exceeded that budget.

If you run a small website, you usually won't need to worry about the crawl budget. Still, it's best to avoid issues such as orphaned pages, duplicate content, or infinite spaces (for example, calendar pages with endless "next month" links) that could stop Google from indexing the useful pages on your website.

Avoid Orphaned Pages

If you want the new pages on your website to be crawled by Google, you need internal links pointing to them. Google's crawlers behave much like a human visitor: if they cannot reach a page from anywhere else on your website, they are unlikely to find, crawl, or index it. A page with no internal links pointing to it is called an "orphaned page."

That is why you should add internal links to every new page, so that Google is less likely to miss it.
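If you can export a map of your internal links (for example, from a site crawl), spotting orphaned pages comes down to finding pages that no other page links to. Here is a minimal sketch; the `LINKS` dictionary and the `find_orphans` helper are hypothetical, and the map must include every published URL (for example, from your sitemap) as a key, because a crawl that only follows links will never discover the orphans themselves.

```python
# Hypothetical internal-link map: each published page -> the internal pages it links to
LINKS = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/indexing-tips"],
    "/about": ["/"],
    "/blog/indexing-tips": ["/blog"],
    "/blog/new-product-launch": ["/"],  # published, but nothing links here
}


def find_orphans(links: dict[str, list[str]], homepage: str = "/") -> set[str]:
    """Return pages that no *other* page links to (the homepage is exempt)."""
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself doesn't count
    }
    return {page for page in links if page != homepage and page not in linked_to}


print(find_orphans(LINKS))  # → {'/blog/new-product-launch'}
```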

Remember that not all pages on your website are equal: pages with stronger backlinks pass more "strength" through their internal links, so how much a new page receives depends on which pages link to it. That's why, if you want Google to pay extra attention to certain pages, you should link to them from the most popular pages on your website.

However, some orphaned pages may lack internal links for a good reason: they are no longer useful. In that case, we recommend either adding a noindex tag or deleting them altogether.

What Is DFI?

Adding internal links might not be enough if crawlers have to go through several other pages first to reach the new content. Again, this isn't too different from what a human would do in the same situation: if they cannot find the information they need within a few clicks, they may give up before reaching the relevant page.

That's why DFI (Distance From Index), the number of clicks needed to reach a page from the homepage, is another factor you should consider 📐

Solving this issue is fairly easy: add internal links so that new content is easy to reach from the homepage. As a rule of thumb, any new page you expect users to find especially valuable should be no more than 3 clicks from the homepage.
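Assuming you have a similar map of your internal links, you can estimate each page's distance from the homepage with a breadth-first search, which finds the minimum number of clicks to every reachable page. The page names and the `click_depths` helper below are hypothetical; the 3-click limit is the rule of thumb described above.

```python
from collections import deque

# Hypothetical internal-link map: page -> pages it links to (e.g. from a site crawl)
LINKS = {
    "/": ["/blog", "/shop"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/page-3"],
    "/blog/page-3": ["/blog/new-guide"],
    "/shop": ["/shop/cakes"],
    "/shop/cakes": [],
    "/blog/new-guide": [],
}

MAX_CLICKS = 3  # rule of thumb for important pages


def click_depths(links: dict[str, list[str]], homepage: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; returns the fewest clicks to each reachable page.

    Pages missing from the result cannot be reached from the homepage at all.
    """
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # BFS reaches each page first via a shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


depths = click_depths(LINKS)
for page, depth in sorted(depths.items()):
    if depth > MAX_CLICKS:
        print(f"{page} is {depth} clicks from the homepage")
```

With the sample data, only `/blog/new-guide` (4 clicks deep) is flagged; adding a link to it from `/blog` or the homepage would bring it within the limit.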

Focus on Valuable Content

The pages that Google deems valuable tend to be indexed first. But how do you increase a page's "value"? You certainly can't ask Googlebot directly…

Well, Google's algorithm pays close attention to a website's perceived authority. If external links from many different corners of the internet point to a particular page on your website, that signals to the crawlers that the content may be valuable, which makes the page more likely to be crawled and indexed.

However, earning backlinks through outreach is not the only way to show Google's algorithm that users might find your new content helpful. Try to avoid having too many pages with thin content (as a rough rule of thumb, fewer than 500 words). Where possible, expand them, for example by answering common questions related to the page's topic.

Alternatively, you could merge several thin pages into a single, more comprehensive one. On top of that, remember to add meta tags, and avoid using the same title on different pages.
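To find candidates for expanding, merging, or retitling, a short script can flag pages below the 500-word rule of thumb and titles shared by more than one page. This is a sketch under assumptions: the `PAGES` data and the `audit` helper are made up for illustration, and splitting on whitespace gives only a rough word count.

```python
from collections import defaultdict

# Hypothetical page data: URL -> (title, body text).
# In practice you would export this from your CMS or a site crawl.
PAGES = {
    "/cakes": ("Cakes | Local Bakery", "Our cakes are baked fresh every morning. " * 150),
    "/cookies": ("Cookies | Local Bakery", "Fresh cookies, baked daily."),
    "/cookies-2": ("Cookies | Local Bakery", "More cookies."),
}

THIN_THRESHOLD = 500  # words; rule of thumb for "thin" content


def audit(pages: dict[str, tuple[str, str]]) -> tuple[list[str], dict[str, list[str]]]:
    """Return (thin page URLs, {duplicated title: URLs that share it})."""
    # Rough word count: split the body text on whitespace
    thin = [url for url, (_, body) in pages.items() if len(body.split()) < THIN_THRESHOLD]

    # Group URLs by title, then keep only titles used more than once
    by_title: dict[str, list[str]] = defaultdict(list)
    for url, (title, _) in pages.items():
        by_title[title].append(url)
    duplicates = {title: urls for title, urls in by_title.items() if len(urls) > 1}

    return thin, duplicates


thin, duplicates = audit(PAGES)
print("Thin pages:", thin)
print("Duplicate titles:", duplicates)
```

Here the two short cookie pages are both thin and share a title, which makes them natural candidates for merging into one page.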

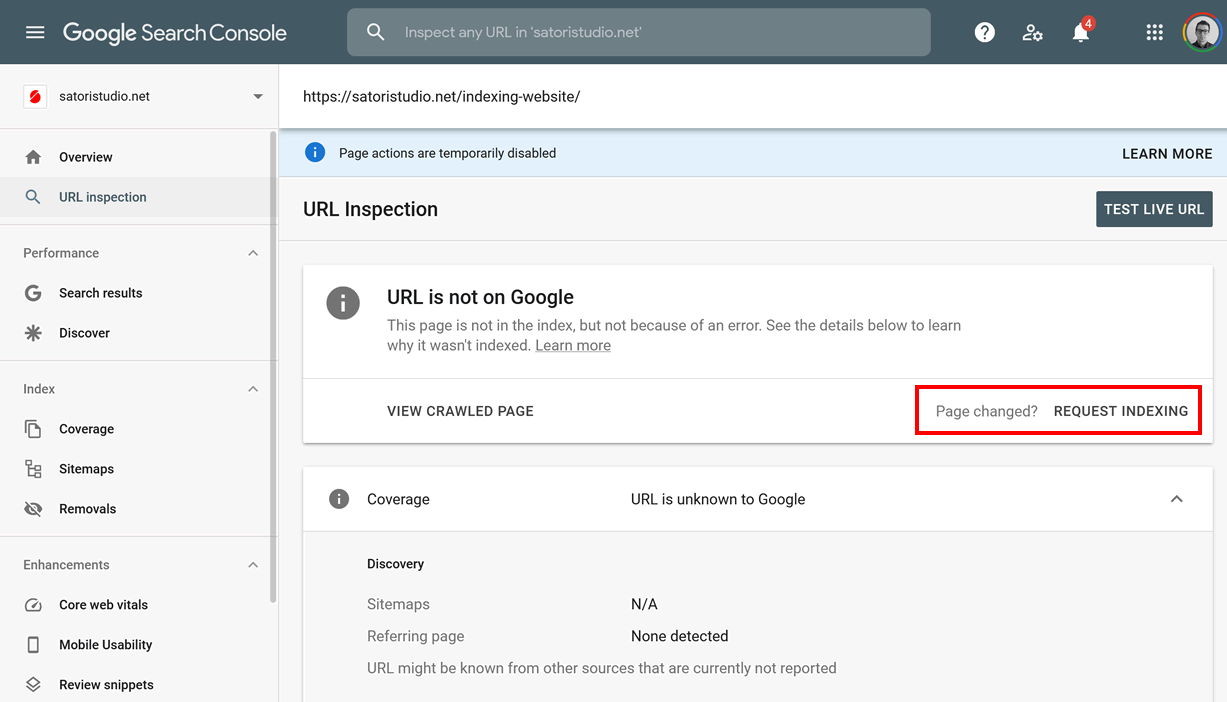

Request Indexing

Although it is by no means a surefire way to get your page indexed, you can ask Google to index it. How? Open Google Search Console, paste the URL of the new page into the URL Inspection tool, and then click "Request Indexing."

Bear in mind that, regardless of the steps you've taken, indexing still won't happen instantly, and Google may decide not to index the page at all if it runs into any of the issues mentioned above.

Conclusion

Whether you are running an e-commerce website, an online bookstore, or a local bakery – you need a robust online presence to compete in 2026.

However, even if you spend long hours writing helpful articles to attract new visitors and customers to your website, your efforts might prove fruitless for a simple reason: Google hasn't indexed your pages.

As we've shown above, several factors affect how quickly crawlers visit and index your website. Following the tips in this article will help your awesome content get indexed as quickly as possible.
